Why Our LLM Reviews Matter

Our rigorous testing methodology looks past marketing claims to deliver honest, data-driven insight into how large language models (LLMs) perform in real-world scenarios.

Comprehensive Benchmarking

We test each model extensively across multiple dimensions, including accuracy, speed, context handling, and completion of specialized tasks. Our benchmark suite covers coding challenges, creative writing, analytical reasoning, and domain-specific knowledge. Every model runs through the same testing protocol, so comparisons are fair and results are consistent.
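To illustrate what an identical testing protocol means in practice, here is a minimal Python sketch of a benchmark harness. It sends the same prompts, in the same order, to every model and reports overall accuracy, per-category accuracy, and median latency. It assumes each model is wrapped as a plain function from prompt to answer and scores answers by exact match. The model names and stub functions are hypothetical placeholders, and tasks such as creative writing would need rubric-based or human scoring rather than exact match.

```python
import statistics
import time
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class TestCase:
    category: str  # e.g. "coding", "reasoning", "knowledge"
    prompt: str
    expected: str


@dataclass
class ModelReport:
    accuracy: float
    median_latency_s: float
    per_category: Dict[str, float]


def run_benchmark(models: Dict[str, Callable[[str], str]],
                  cases: List[TestCase]) -> Dict[str, ModelReport]:
    """Run every model over the same cases, in the same order."""
    reports: Dict[str, ModelReport] = {}
    for name, generate in models.items():
        results_by_cat: Dict[str, List[bool]] = {}
        latencies: List[float] = []
        for case in cases:
            start = time.perf_counter()
            answer = generate(case.prompt)
            latencies.append(time.perf_counter() - start)
            # Exact-match scoring (an assumption; real suites often use rubrics).
            ok = answer.strip().lower() == case.expected.strip().lower()
            results_by_cat.setdefault(case.category, []).append(ok)
        all_results = [ok for res in results_by_cat.values() for ok in res]
        reports[name] = ModelReport(
            accuracy=sum(all_results) / len(all_results),
            median_latency_s=statistics.median(latencies),
            per_category={c: sum(r) / len(r) for c, r in results_by_cat.items()},
        )
    return reports


# Hypothetical stub "models" standing in for real API clients.
cases = [
    TestCase("reasoning", "What is 2 + 3?", "5"),
    TestCase("knowledge", "What is the capital of France?", "Paris"),
]
answers = {"What is 2 + 3?": "5", "What is the capital of France?": "Paris"}
models = {
    "model-a": lambda prompt: answers[prompt],
    "model-b": lambda prompt: "5",
}

reports = run_benchmark(models, cases)
for name, report in reports.items():
    print(name, report.accuracy, report.per_category)
```

Keeping the case list fixed and shared is the point of the design: any difference in the reports comes from the models, not from the prompts they happened to see.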

Cost-Effectiveness Analysis

Beyond raw performance metrics, we analyze the true cost of implementation, including API pricing, infrastructure requirements, and operational overhead. Our reviews include detailed ROI calculations for different use cases, helping you estimate the total cost of ownership of each LLM across different deployment scenarios.
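The arithmetic behind a total-cost-of-ownership estimate can be sketched roughly as follows: monthly API spend is request volume times the per-request token cost, TCO adds fixed infrastructure and operational costs on top, and ROI is (monthly benefit - monthly cost) / monthly cost. This is a simplified sketch; the cost categories are assumptions, and every price and volume below is a hypothetical placeholder, not any provider's actual rate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical pricing and overhead assumptions, all in USD."""
    input_per_mtok: float          # price per 1M input tokens
    output_per_mtok: float         # price per 1M output tokens
    monthly_infrastructure: float  # hosting, gateways, monitoring, etc.
    monthly_operations: float      # staff time for maintenance and support


@dataclass(frozen=True)
class Workload:
    requests_per_month: int
    avg_input_tokens: int
    avg_output_tokens: int


def monthly_api_cost(p: Pricing, w: Workload) -> float:
    """Token-based API spend: per-request cost times request volume."""
    per_request = (
        w.avg_input_tokens * p.input_per_mtok
        + w.avg_output_tokens * p.output_per_mtok
    ) / 1_000_000
    return per_request * w.requests_per_month


def monthly_tco(p: Pricing, w: Workload) -> float:
    """Total cost of ownership: API spend plus fixed monthly costs."""
    return monthly_api_cost(p, w) + p.monthly_infrastructure + p.monthly_operations


def roi(monthly_benefit: float, monthly_cost: float) -> float:
    """Return on investment as a fraction (0.5 means a 50% return)."""
    return (monthly_benefit - monthly_cost) / monthly_cost


# Placeholder numbers for illustration only; substitute real quotes and estimates.
pricing = Pricing(input_per_mtok=3.0, output_per_mtok=15.0,
                  monthly_infrastructure=500.0, monthly_operations=2000.0)
workload = Workload(requests_per_month=100_000,
                    avg_input_tokens=800, avg_output_tokens=300)

api_cost = monthly_api_cost(pricing, workload)
tco = monthly_tco(pricing, workload)
roi_value = roi(monthly_benefit=5000.0, monthly_cost=tco)
print(f"API: ${api_cost:,.2f}  TCO: ${tco:,.2f}  ROI: {roi_value:.1%}")
```

With these placeholder numbers, API spend comes to about $690 a month while fixed costs add $2,500, which illustrates why per-token pricing alone can understate the total cost of running a model.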

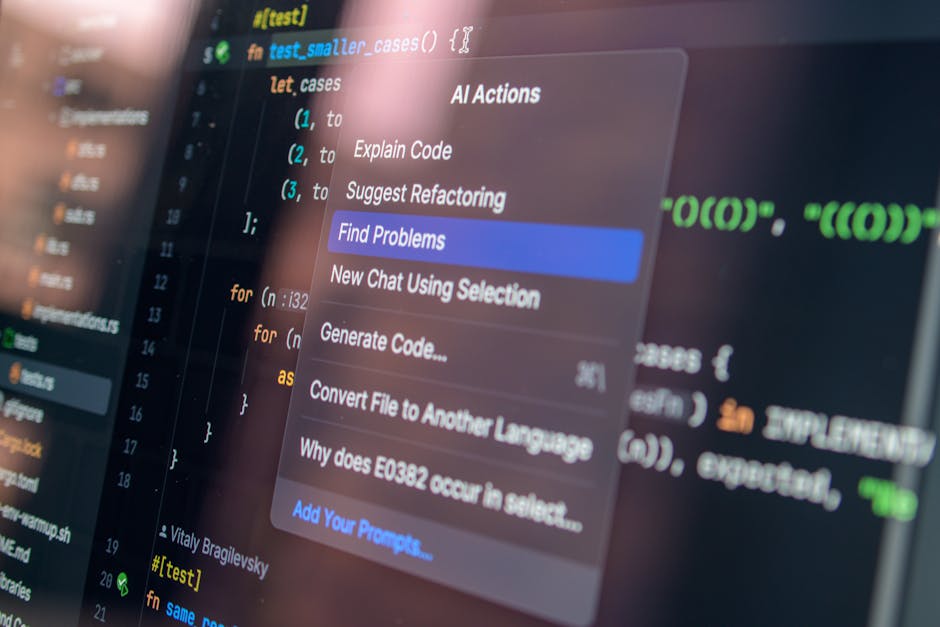

Real-World Application Testing

Our testing methodology focuses on practical business applications rather than theoretical capabilities. We evaluate LLMs on customer service scenarios, content creation workflows, code generation tasks, and data analysis challenges, so that our reviews reflect how these models actually perform in the production environments where businesses deploy them.

Integration Assessment

We thoroughly evaluate how well each LLM integrates with existing business systems and popular development frameworks. Our reviews cover API reliability, documentation quality, SDK availability, and compatibility with major cloud platforms. This integration analysis helps teams gauge implementation complexity and spot potential technical challenges before committing to a model.